We recently moved our production service to the new Azure Kubernetes Service (AKS) from Microsoft. AKS is a managed Kubernetes (K8s) offering from Microsoft, which in this case, means Microsoft manage part of the cluster for you. With AKS you pay for your worker nodes, not your master nodes (and thus control plane) which is nice.

Don’t know what Kubernetes is?

Kubernetes is an open-source system for automating deployment, scaling, and management of containerized applications. — https://kubernetes.io/

Why did we move

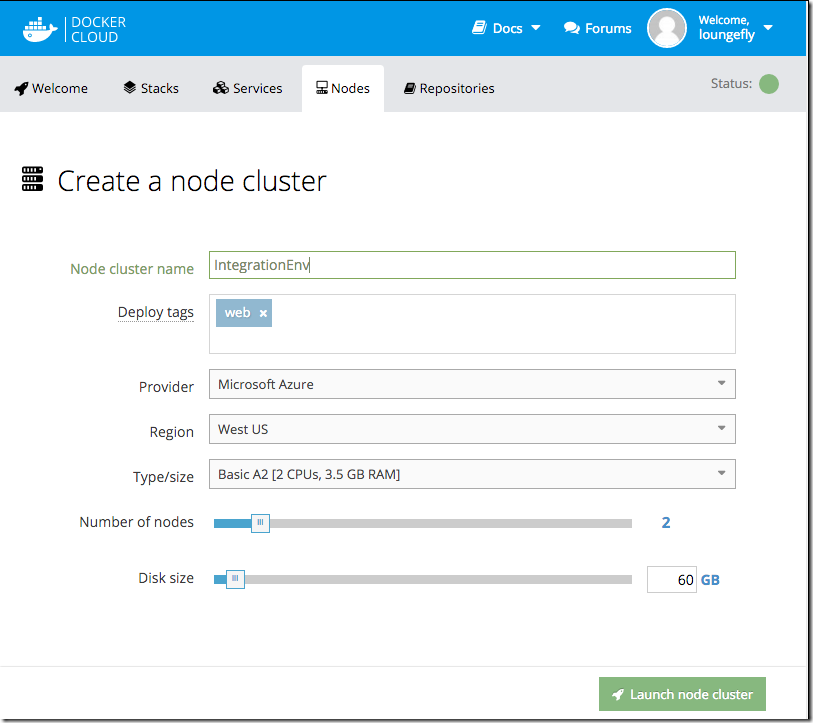

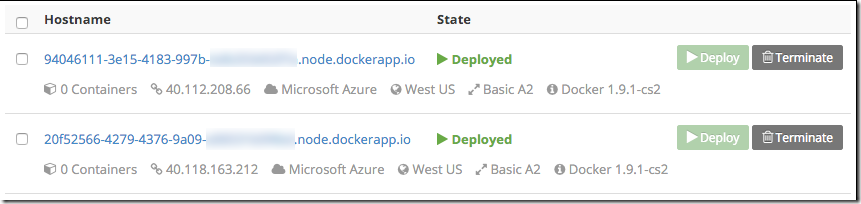

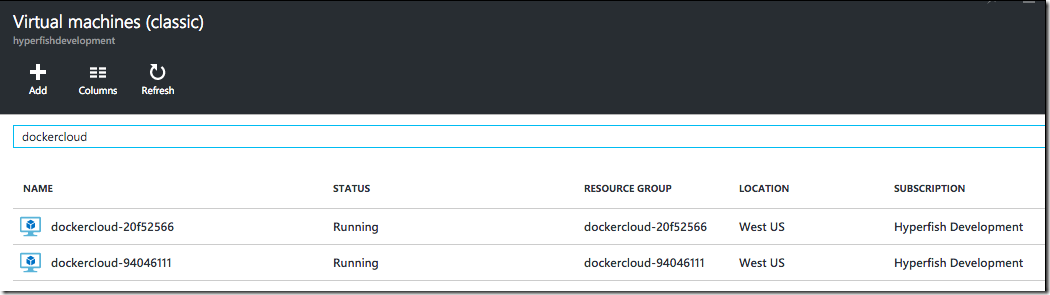

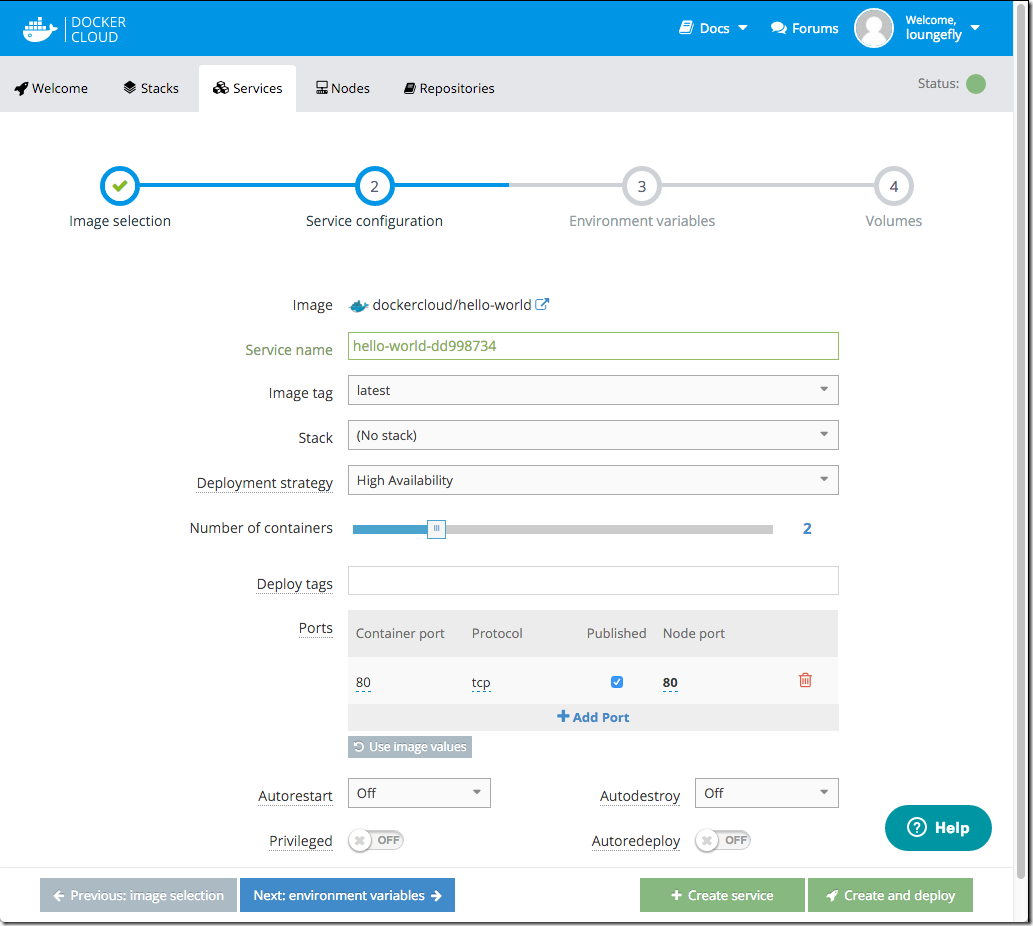

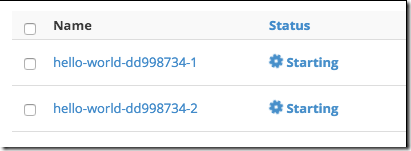

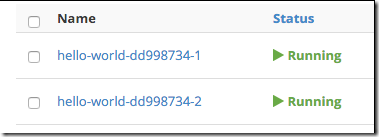

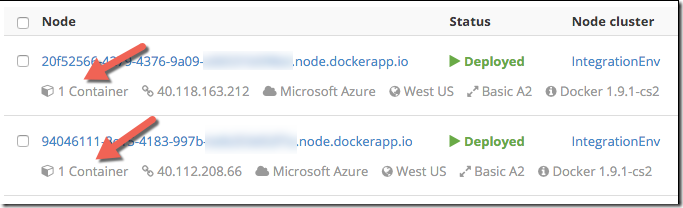

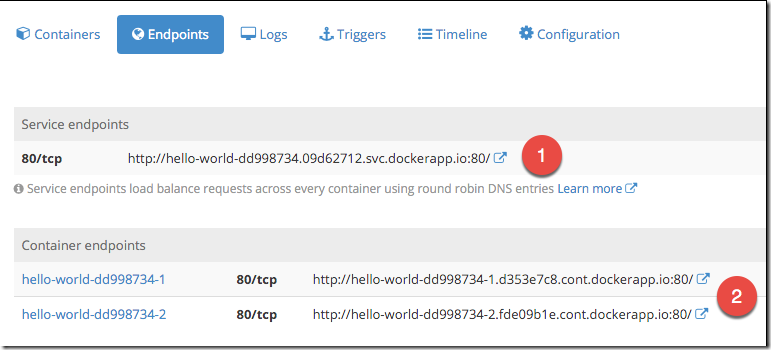

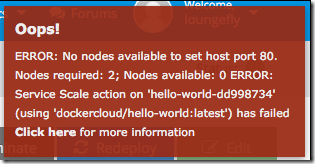

The short story is that we were forced to evaluate our options as the current orchestration software we were using to run our service was shutting down. Docker Cloud was a sass offering that offered capabilities around orchestration/management or our docker container based service. This meant we used it for deployment of new containers, rolling out updates, scheduling those containers on various nodes (VMs) and keeping an eye on it all. It was a very innovative offering 2 years ago when we started using it, was cloud based, easy to use and price competitive. Anyway, Docker with their new focus on making money the Enterprise decided to retire the product and we were forced to look elsewhere.

Kubernetes was the obvious choice. It’s momentum in the industry means there are a plethora of tools, guidance, community and offerings around it. Our service was already being run in Docker containers and so didn’t require significant changes to run in Kubernetes. Our service is comprised of ~20 or so “services” e.g. frontend, API. Kubernetes helps you run these services. It offers things like scheduling those containers to run, managing spinning up new ones if they stop etc.

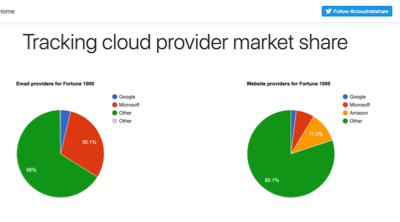

Every major cloud provider has a Kubernetes offering now. Googles GKE has been around since as early as Nov 2014 & Amazon’s AWS recently released their EKS (on June 2018).

Choosing AKS

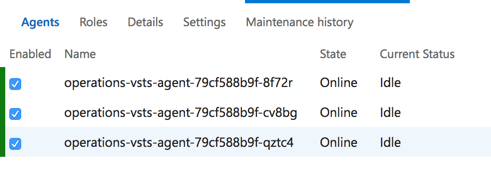

We are not a big team and we couldn’t afford to have a dedicated team to run our orchestration and management platform. We needed an offering that was run by Kubernetes experts who know the nitty gritty of running K8s. The team building AKS at Microsoft are those people. Lead by the co-founder of the Kubernetes project Brendan Burns the MS team know their stuff. It was compelling that they were looking at new approaches in the managed K8s space like not charging for the control plan/master nodes in a cluster and were set on having it just be vanilla K8s and not a weird fork with proprietary peculiarities.

Summary of reasons (in no particular order):

- Azure based. Our customers expect the security and trust that Microsoft offers. Plus we were already in Azure.

- Managed offering. We didn’t want to have to run the cluster plumbing.

- Cost. Solid price performance without master node costs.

- Support. Backed by a team that know their stuff and offer support (more on this below).

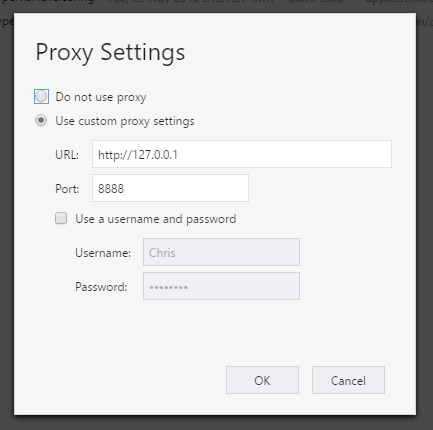

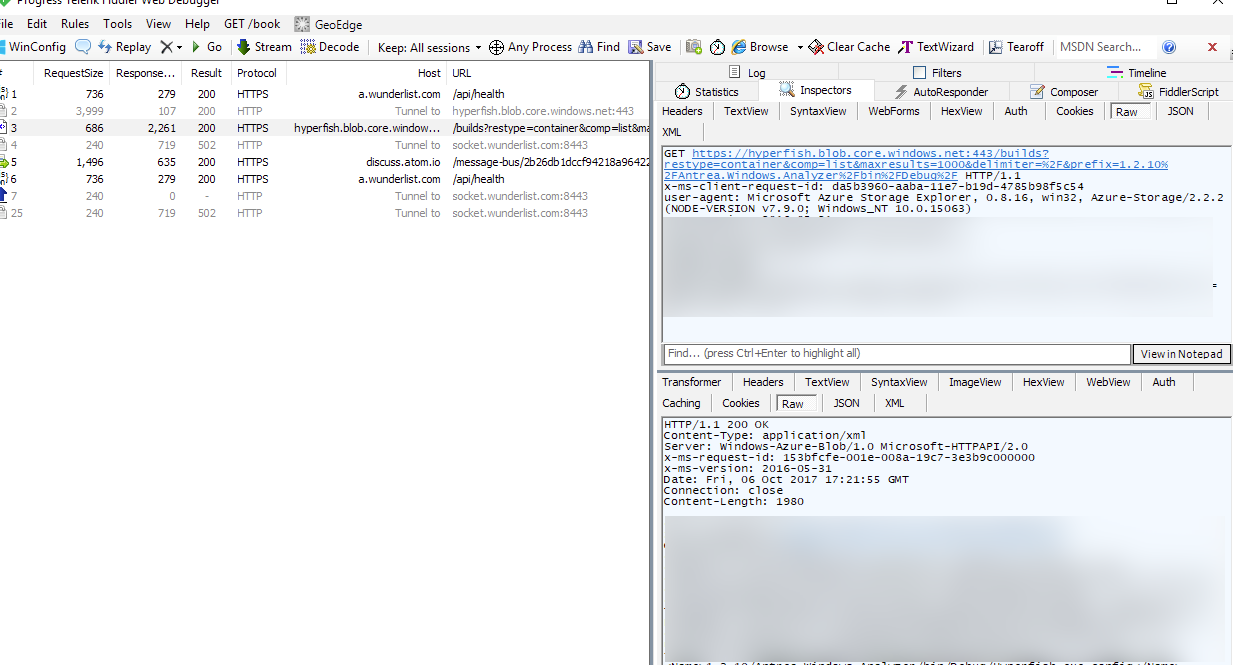

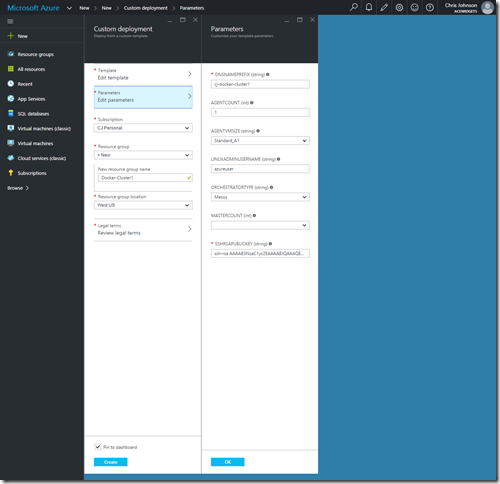

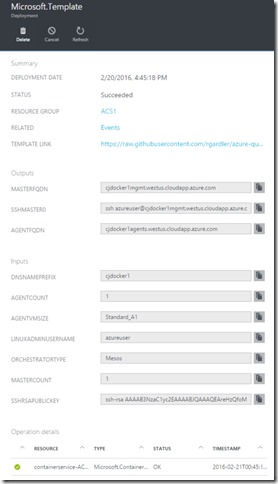

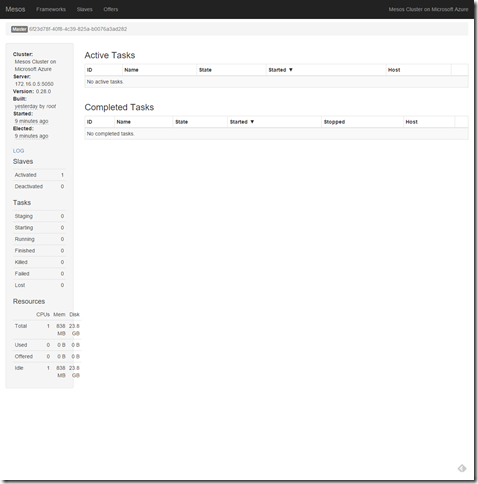

AKS is relatively new and at the time we started considering our options for the move AKS was not a generally available service. We didn’t know when it would GA either. This pained me to say the least, but we had a hunch it was coming soon. To mitigate this we investigated acs-engine which is a tool that AKS uses behind the scenes to generate ARM templates for Azure to stand up a K8s cluster. Our backup plan was to run our own K8s cluster for a while until AKS went GA. Fortunately we didn’t need to do this 🙂

Moving to Kubernetes

We were in the fortunate position that our service was already running in a set of Docker containers. Tweaking these to run with Kubernetes only required minor changes. Those were all focused on supplying configuration to the containers. We used a naming convention for environment variables that wasn’t Kubernetes friendly, so we needed to tweak the way we read that configuration in our containers.

The major work required was in defining the “structure” of our application in Kubernetes configuration files. These files define the components in your application, the configuration of them, how they can be communicated with & resources they need. These files are just YAML files however manually building them can be tedious when you have quite a few to do. Also there can be repetition between them and keeping them in sync can be painful.

This is where Helm comes in.

Helm is the “package manager for Kubernetes” … but I prefer to think of it as a tool that helps you build templates (“charts”) of your application.

In Azure speak they are like ARM templates for your application definition.

The great part about Helm is that it separates the definition of your application and the environmental specifics. For example, you build a chart for your application that might contain a definition for your frontend app and an API, what resources they need and the configuration they get e.g. DB connection string. But you don’t have to bake the connection string into your chart. That is supplied in an environment specific values file. This lets you define your application and then create environment specific values files for each environment you will deploy your application to e.g. test, stage, production etc. You can manage your chart in source control etc. and manage your environment specific values files (with secrets etc.) outside of source control in a safe location.

This means we can deploy our service on a developer laptop, AKS cluster in Azure for test or into Production using the exact same definition, but with different environment specific configuration.

Chart + Environment config == Full definition.

We already had a Docker Compose definition of our service that our engineers used to run the stack locally during development. We took this and translated it into a Helm chart. This took a bit of work as we were learning along the way, but the result is excellent. One definition of our service in a declarative, modular and configurable way that we use across development, test environments and production.

Helm charts are already available for loads of different applications too. This means if you want to run apps like redis, postgres or zookeeper you don’t have to build your own helm charts.

That’s a lot of words … what’s the pay off Chris?

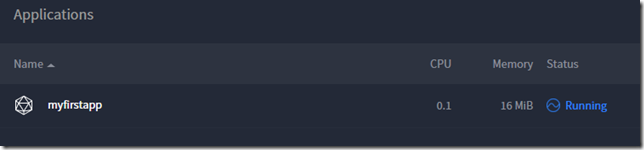

The best way I can demonstrate in a blog post the value all this brings is to show you how simple it is to deploy our application.

Here are the CLI steps for deploying a brand new, fully functional 4 node environment in Azure with AKS + Helm for our application

az aks create –resource-group myCluster –name myCluster –admin-username cjadmin –dns-name-prefix appName –node-count 4

helm upgrade myApp . -f values.yaml -f values.dev.yaml –install

Two commands to a fully functional environment, running on a 4 node K8s cluster in Azure. Not bad at all!! It takes us about 10 mins to spin up depending on how fast Azure is at provisioning nodes.

What didn’t go well

Of course there were things that didn’t go perfectly along with all this too. Not specifically AKS related, but Azure related. One in particular that really pissed me off 🙂 During the production move we needed to move some static IP addresses around in our production environment. We started the move and it seemingly failed part the way through the move. This left our resource group in Azure locked for ~4 hours!! During this time we couldn’t update, edit or add anything to our resource group. We logged a Severity A support ticket with MS which is supposed to have a 1 hour response time … over 3 hours later we were still waiting. We couldn’t wait and needed to take mitigation steps which included spinning up a totally new and separate environment (easy with AKS!) and doing some hacky things with VMs and HAProxy to get traffic proxied correctly to it. Some of our customers whitelist our static IP addresses in their firewalls so we don’t have the luxury of a simple DNS change to point at a new environment. It was disappointing to say the least that we pay for a support contract from MS but Azure failed and more importantly our support with MS failed and left us high and dry for 4 hours. PS: they still don’t know what happened, but are investigating it.

Summary

Docker closing it’s Docker Cloud offering was the motivation we needed to evaluate Kubernetes and other services. It left me with a bad taste in my mouth with Docker as a company and I will find it hard to recommend or trust taking a dependency on a product offering from them. Deprecating a SaaS product with 2 months notice is not the right way to operate a business if you are interested in keeping customers IMHO. But nevertheless a good thing for us ultimately!

Our experience moving to AKS has been nothing short of excellent. We are very glad the timing of AKS worked in our favor and that Microsoft have a great offering that meets our needs. It’s still early days with AKS and time will be the ultimate proof, however as of today we are very happy with the move.

If you are new to container based applications and are from a Microsoft development background I recommend checking out a short tutorial on ASP.Net + Docker. I have thoroughly enjoyed building a SaaS service that serves millions of users in a container based model and think many of the benefits it offers are worth considering for new projects.

If you want to learn Kubernetes in Azure I recommend their getting started tutorial. It’s will give you a basic understanding of K8s and how AKS works.

Try out the tutorial on AKS + Helm for deploying applications to get started on your journey to loving Helm.

Finally … I interviewed Gabe Monroy from the AKS team when I was at Build 2018 for the Microsoft Cloud show if you are interested in hearing more about AKS, the team behind it and Microsoft’s motivations for building it!

-CJ

Many years back a small group of friends started the Office 365 developer slack network. A bunch of people joined and then it rapidly went nowhere

Many years back a small group of friends started the Office 365 developer slack network. A bunch of people joined and then it rapidly went nowhere